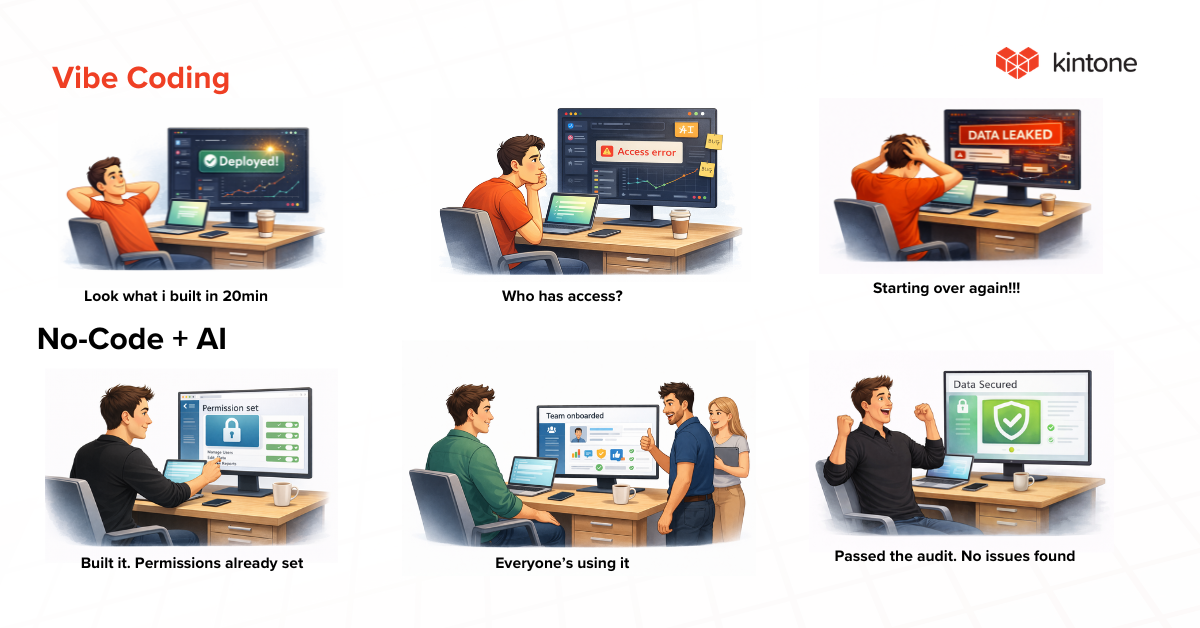

Vibe coding has recently taken over the conversation about how software gets built. The concept is simple: you describe what you want in plain language, let AI generate the code, and ship it, with no development experience required and no waiting on IT. For rapid prototyping and proof-of-concept work, AI-powered code generation can compress weeks of development into hours.

But over the past six months, vibe-coded applications have made headlines for the wrong reasons. Security firms have documented thousands of vulnerabilities across publicly deployed apps. A social networking platform built entirely through vibe coding was breached, exposing 1.5 million authentication tokens and 35,000 email addresses. And researchers continue to raise alarms about what happens when AI-generated code reaches production without review.

Meanwhile, the no-code market is growing faster than ever. The global low-code/no-code market reached $48.91 billion in 2026, with projections pointing toward $376 billion by 2034. Organizations are building at speed, and the platforms combining that speed with structure are the ones gaining traction.

What Vibe Coding Gets Right and Where It Falls Apart

The appeal of vibe coding is that developers and non-developers alike can describe a feature in conversational language and receive functioning output in minutes. For testing ideas and building throwaway prototypes, it delivers genuine value.

But functioning and secure are two very different things. A Carnegie Mellon study found that 61% of AI-generated code is functionally correct, while only 10.5% meets security standards. Veracode's 2025 GenAI Code Security Report found that 45% of AI-generated code introduces security vulnerabilities, with large language models choosing insecure methods nearly half the time when given the option.

The pattern across these incidents is consistent. Vibe-coded apps look like they work, but under the surface, they often lack the most basic security controls. Input validation, proper authentication logic, role-based access, and encryption are frequently absent or incorrectly implemented.
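To make the gap between "works" and "secure" concrete, here is a minimal Python sketch, not taken from any of the incidents above. The table layout, the function names `find_user_unsafe` and `find_user_safe`, and the sample data are all hypothetical. Both lookups return the right row for a normal email address, but only one of them holds up against crafted input.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, token TEXT)"
    )
    conn.executemany(
        "INSERT INTO users (email, token) VALUES (?, ?)",
        [("ana@example.com", "tok-a"), ("bo@example.com", "tok-b")],
    )
    return conn


def find_user_unsafe(conn: sqlite3.Connection, email: str) -> list:
    # Anti-pattern: user input is pasted straight into the SQL string,
    # so the input can change the meaning of the query.
    query = f"SELECT email, token FROM users WHERE email = '{email}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, email: str) -> list:
    # Basic input validation (deliberately simplistic for this sketch).
    if "@" not in email or len(email) > 254:
        raise ValueError("invalid email address")
    # Parameterized query: the driver treats the input as data, never as SQL.
    return conn.execute(
        "SELECT email, token FROM users WHERE email = ?", (email,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "x@example.com' OR '1'='1"
    print("unsafe:", find_user_unsafe(conn, payload))  # leaks every row
    print("safe:  ", find_user_safe(conn, payload))    # matches nothing
```

Both functions pass a happy-path test, which is exactly why this class of flaw is easy to miss when nobody reviews the generated code.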

A user who built an entire social networking site without writing a single line of code ended up with a misconfigured database that exposed over a million records. A researcher found 16 vulnerabilities in a single application on a popular vibe coding platform, six of them rated critical, leaking the data of more than 18,000 users.

The issue is structural: AI coding tools optimize for functionality and rarely account for security. When the person using the tool lacks the expertise to review what was generated, vulnerabilities ship to production. The scale shows up in the data. A separate analysis of over 5,600 publicly deployed vibe-coded applications identified more than 2,000 vulnerabilities, more than 400 exposed secrets, and 175 instances of personally identifiable information, including medical records.
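Exposed secrets are one of the easier problems to check for automatically. The sketch below is a simplified illustration in Python, assuming two hypothetical regular-expression rules; the name `find_secrets` and the rule set are inventions for this example, and real scanners use far larger rule sets. It flags lines of source code that appear to contain hard-coded credentials.

```python
import re

# Hypothetical, deliberately small rule set for illustration only.
SECRET_PATTERNS = {
    # Shape commonly used by AWS access key IDs: "AKIA" + 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A credential-like name assigned a quoted literal of 8+ characters.
    "hardcoded_credential": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(source: str) -> list:
    """Return (line_number, rule_name) pairs for lines that match a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


if __name__ == "__main__":
    sample = (
        'API_KEY = "sk_live_1234567890abcdef"\n'
        'name = "demo"\n'
        'aws = "AKIAABCDEFGHIJKLMNOP"\n'
    )
    for lineno, rule in find_secrets(sample):
        print(f"line {lineno}: possible {rule}")
```

A check like this catches only the obvious cases, and it still depends on someone knowing to run it before the app goes live.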

How Built-In Governance Changes the Equation

No-code platforms take a different approach. Teams build applications inside a structured environment with defined guardrails, and that difference matters more now than it did a year ago.

When a team builds an application on a governed no-code platform, security controls come built in. Role-based permissions determine who can see, edit, and share data. Audit trails log every change automatically. Workflow management tools enforce approval steps and clear accountability. Encryption protects data in transit and at rest.
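As a rough illustration of what "built in" means, the Python sketch below shows a record type where the permission check and the audit entry happen inside the platform's own edit method, so an app builder cannot forget them. It is a conceptual sketch only: `GovernedRecord`, `ROLE_PERMISSIONS`, and the role names are hypothetical and do not represent any vendor's actual API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role model: which actions each role may perform.
ROLE_PERMISSIONS = {
    "viewer": {"view"},
    "editor": {"view", "edit"},
    "admin": {"view", "edit", "share"},
}


@dataclass
class GovernedRecord:
    """A record whose edits are always permission-checked and logged."""

    data: dict
    audit_log: list = field(default_factory=list)

    def _log(self, user: str, role: str, action: str, allowed: bool) -> None:
        # Every attempt is recorded, including the ones that are denied.
        self.audit_log.append(
            {
                "at": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "role": role,
                "action": action,
                "allowed": allowed,
            }
        )

    def edit(self, user: str, role: str, key: str, value) -> None:
        allowed = "edit" in ROLE_PERMISSIONS.get(role, set())
        self._log(user, role, f"edit:{key}", allowed)
        if not allowed:
            raise PermissionError(f"role '{role}' may not edit this record")
        self.data[key] = value


if __name__ == "__main__":
    record = GovernedRecord(data={"status": "draft"})
    record.edit("ana", "editor", "status", "approved")
    try:
        record.edit("bo", "viewer", "status", "rejected")
    except PermissionError as err:
        print("denied:", err)
    print(record.data)
    print(len(record.audit_log), "audit entries")
```

The design point is that the check and the log live in one place that every application inherits, rather than being re-created, or omitted, in each generated app.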

With vibe coding, every one of those controls has to be specified, reviewed, and verified by the person using the tool. And as the data shows, that review step is where things consistently break down.

The core distinction is about where security responsibility lives. Vibe coding shifts that responsibility to the individual user. Governed no-code platforms absorb that responsibility into the platform itself.

Where AI and No-Code Converge

The more interesting development in 2026 is how AI is making no-code platforms smarter and faster without sacrificing the governance that makes them reliable. Across the industry, no-code vendors are embedding conversational AI directly into their platforms, allowing users to describe business problems in natural language and receive structured, governed applications in return. The output is an application inside a managed environment, with permissions, data controls, and audit capabilities already in place.

This approach is fundamentally different from vibe coding because the AI operates within the constraints of the platform's governance framework. The code is never exposed, and the security architecture is never left to the user to configure manually. The platform handles the structure, and the user focuses on the business logic.

Kintone has moved in this direction with Kintone AI Lab, a feature that lets users design applications and manage workflows using conversational chat commands. A user can describe a business problem in a few sentences, and the platform generates a structured application complete with permissions, data fields, and workflow steps.

All of it sits inside a SOC 2 certified platform with granular permissions and built-in audit trails. User data is never used to train external AI models, and the Kintone Trust Center provides full transparency into the platform's security infrastructure.

For teams building internal tools, customer databases, workflow systems, and operational processes, this combination of AI-powered speed and platform-level governance is where the industry is heading.

Five Questions to Ask Before Choosing a Platform in 2026

The vibe coding conversation has done one valuable thing: it has forced organizations to think more carefully about how software gets built and who is responsible when something goes wrong. Teams evaluating how to build workflows and internal tools in 2026 are asking sharper questions than they were a year ago.

- Does the platform produce governed, auditable applications, or does it generate raw code that nobody on the team can review?
- Are security controls like permissions, encryption, and access restrictions built in by default, or do they depend on the user knowing to ask for them?
- Can business teams build and modify applications without relying on IT or external developers?
- Does the platform maintain a full audit trail of changes, approvals, and data access?
- Is the platform certified to recognized security standards like SOC 2, and does it provide transparency into its compliance practices?

These questions apply to any platform. The organizations asking them are the ones building on solid ground while others are cleaning up after the next headline.

Kintone is built on this foundation. With Kintone AI Lab, teams can create custom workflow applications through conversational AI, all inside a SOC 2 certified platform with granular permissions, audit trails, and enterprise-grade security. If you are evaluating how to build smarter without taking on unnecessary risk, start a free trial and see the difference for yourself.

About the Author

Agbaje Feyisayo is a dynamic content marketing expert with over 10 years of experience writing high-converting content across SaaS, technology, AI, and digital transformation. With a strong background in product marketing and storytelling, Agbaje helps top-tier companies turn complex ideas into actionable content that attracts and converts leads.